AI Tools Have No Sense of Time

Your AI assistant doesn't know what day it is. Here's why that matters and what to do about it.

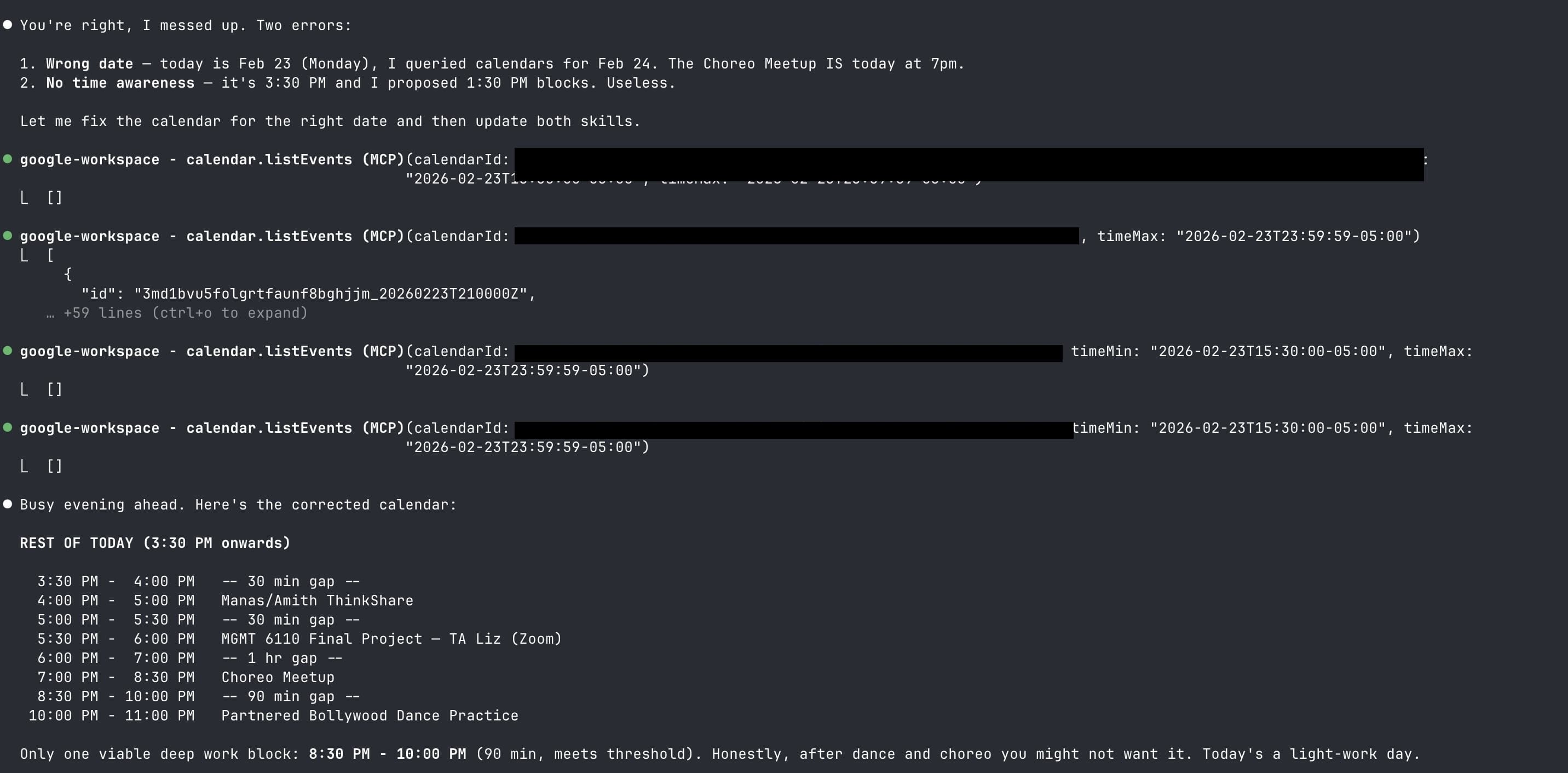

I asked Claude to check my calendar and find a window for deep work. It came back with a neatly formatted schedule. One problem: it queried tomorrow's calendar instead of today's. And the time blocks it proposed? They started two hours in the past.

When I pointed this out, Claude admitted it immediately: "Wrong date. Today is Feb 23, I queried calendars for Feb 24. No time awareness. It's 3:30 PM and I proposed 1:30 PM blocks. Useless."

That last word stuck with me. Useless. Because a scheduling assistant that doesn't know what time it is isn't just slightly wrong. It's fundamentally broken.

This isn't a Claude-specific problem. It's every large language model. And the deeper I looked into it, the more I realized this one limitation quietly undermines a huge category of AI products that people are building right now.

The Frozen Moment

Here's what's actually happening under the hood. Language models don't have clocks. They don't have calendars. They don't experience time passing between your messages. Every response is generated from a frozen snapshot of the world as it existed in their training data.

When you open a conversation with Claude or ChatGPT, the platform injects a line into the system prompt: something like "The current date is February 24, 2026." That's it. That's the entire temporal awareness system. A single static string, set once when the conversation starts, never updated.

This means: if your conversation crosses midnight, the model still thinks it's yesterday. If you're in a different timezone than the server, the date might be off by a day. The model has no concept of "right now" vs. "two hours ago" vs. "next Tuesday."
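To make the timezone case concrete, here is a minimal Python sketch. The instant and the zone name are illustrative choices, not anything taken from a real platform's configuration; the point is only that one moment in time maps to two different calendar dates.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

# One instant in time: 03:30 UTC on Feb 24, 2026 (an arbitrary example).
instant = datetime(2026, 2, 24, 3, 30, tzinfo=timezone.utc)

# The date a server running on UTC would stamp into the prompt.
server_date = instant.date()

# The date on the wall calendar of a user in Los Angeles at that same instant.
user_date = instant.astimezone(ZoneInfo("America/Los_Angeles")).date()

print(server_date)  # 2026-02-24
print(user_date)    # 2026-02-23
```

A date string computed on the server side is already "tomorrow" for this user before the conversation even starts.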

Researchers describe this as LLMs treating events as "static facts rather than points along a continuum." The architecture processes sequences of tokens but has no temporal state. It's pattern matching, not time tracking.

The Communications of the ACM put it cleanly: LLMs acquire temporal understanding "only implicitly through date references in training data and linguistic cues." They know that Christmas is in December because the training data says so, not because they've experienced December.

Three Layers of Time Blindness

Working with AI daily, I've noticed the problem shows up in three distinct layers. Each one is worse than the last.

Layer 1: Wrong Date

This is the most visible failure. Claude has a documented tendency to default to its knowledge cutoff date instead of the current date. A GitHub issue describes it well: "January 2025 feels 'current' to Claude based on its training data. It's a cognitive bias similar to humans writing the previous year on checks in January."

In another case, Claude Code "consistently thought it was 2024, questioning the validity of logs showing 2025 dates and suggesting they might be a firmware bug." Not knowing the date was only half the failure: the model confidently argued that the wrong date was correct.

This also silently degrades web searches. One developer documented how Claude searched for "email OTP authentication best practices 2024" when the actual year was 2025. The user never noticed they were getting year-old results because the model never flagged it.

Layer 2: Wrong Time

Even when the date is correct, the time is almost never available. My calendar incident is a perfect example. Claude knew it was February 23, but had no idea it was 3:30 PM. So it proposed scheduling blocks starting at 1:30 PM. Blocks that existed two hours in the past.
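A guard against this specific failure can live outside the model. The sketch below assumes candidate blocks arrive as (start, end) pairs of timezone-aware datetimes; `future_blocks` and the fixed UTC-5 offset are hypothetical names and values chosen for illustration.

```python
from datetime import datetime, timedelta, timezone

def future_blocks(candidates, now):
    """Keep only (start, end) blocks that have not started yet."""
    return [(start, end) for start, end in candidates if start >= now]

tz = timezone(timedelta(hours=-5))  # illustrative fixed offset for the user
now = datetime(2026, 2, 23, 15, 30, tzinfo=tz)  # 3:30 PM, as in the incident

candidates = [
    # The block the model proposed: 1:30 PM, already in the past.
    (datetime(2026, 2, 23, 13, 30, tzinfo=tz), datetime(2026, 2, 23, 15, 0, tzinfo=tz)),
    # A block that is still usable.
    (datetime(2026, 2, 23, 16, 0, tzinfo=tz), datetime(2026, 2, 23, 17, 30, tzinfo=tz)),
]

usable = future_blocks(candidates, now)
print(len(usable))  # 1
```

The filter needs a real clock reading for `now`, which is exactly the piece the model cannot supply on its own.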

A developer building an AI fitness trainer on Fly.io discovered their app confidently told users "Since today is Monday" when it was actually Friday. The fix required injecting the user's timezone-localized date via their web framework before every LLM call.

A scheduling product team at Povio found their AI "failed to recognize that 2024 is a leap year" because it wasn't grounded in real calendar data. They also learned that proposed time slots should be normalized to the user's timezone, not UTC, because the model has no spatial or temporal context to do the conversion itself.
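Both lessons can be handled by real calendar code rather than by the model. This is a sketch under assumptions: `localize_slots` is a hypothetical helper, and the Berlin timezone and the example slot are illustrative, not details from Povio's write-up.

```python
import calendar
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

def localize_slots(utc_slots, tz_name):
    """Convert timezone-aware UTC slots into the user's IANA timezone."""
    tz = ZoneInfo(tz_name)
    return [slot.astimezone(tz) for slot in utc_slots]

# Leap years come from the calendar library, not from the model's recall.
print(calendar.isleap(2024))  # True

# 23:00 UTC on leap day 2024 is already March 1 for a user in Berlin (UTC+1).
slots = [datetime(2024, 2, 29, 23, 0, tzinfo=timezone.utc)]
local = localize_slots(slots, "Europe/Berlin")
print(local[0].isoformat())  # 2024-03-01T00:00:00+01:00
```

Showing the UTC value to this user would put the slot on the wrong day, even though the stored instant is correct.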

Layer 3: Wrong Duration

This one gets less attention but matters more as AI agents take on longer tasks. When Claude says "this should take about 30 minutes," it's pattern matching from training data about similar-sounding tasks. It has no ability to measure its own execution time, account for your specific context, or update its estimate as it works.

The TRAM benchmark found GPT-4 class models hit about 88% accuracy on temporal reasoning tasks, trailing human performance by roughly 10 percentage points. Duration estimation specifically was one of the weaker sub-tasks.

Worse, research shows LLMs systematically inherit the planning fallacy from their training data. A survey of cognitive biases in LLMs found they "consistently favor positive scenarios over negative ones" and "exhibit human-like overconfidence in high-stakes decisions." The CoBBLEr benchmark showed 40% of LLM evaluations display bias indicators. When your AI estimates a timeline, it's probably too optimistic for the same reason humans are: the training data is full of best-case projections from project plans and blog posts, not retrospectives on what actually happened.

Why This Matters Right Now

You might think: fine, LLMs don't know the time. Just inject it. Problem solved.

Not quite. Because the problem isn't just about knowing what time it is right now. It's about all the downstream decisions that depend on temporal awareness.

Calendar and scheduling products are the obvious casualty. Home Assistant reported that Google's Gemini incorrectly interprets a Sunday full-day event as occurring on both Sunday and Monday. The same issue appeared with OpenAI's models. If your smart home can't tell when an event ends, it can't automate anything around your schedule.
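One plausible contributor to this kind of misreading is the iCalendar convention that an all-day event's end date is exclusive: a Sunday event is stored as starting Sunday and ending Monday. That this is the cause in the Home Assistant case is an assumption here, not something confirmed above. Under that assumption, a sketch of resolving the range in code before the model sees it might look like this, with `describe_all_day` as a hypothetical helper:

```python
from datetime import date, timedelta

def describe_all_day(summary, start, end_exclusive):
    """Render an all-day event using its inclusive last day.

    Assumes the iCalendar convention that the end date is exclusive.
    """
    last_day = end_exclusive - timedelta(days=1)
    if last_day == start:
        return f"{summary}: all day on {start.isoformat()}"
    return f"{summary}: all day from {start.isoformat()} through {last_day.isoformat()}"

# A Sunday-only event, stored with an exclusive end of Monday.
print(describe_all_day("Brunch", date(2026, 2, 22), date(2026, 2, 23)))
# Brunch: all day on 2026-02-22
```

Handing the model an unambiguous sentence instead of a raw start/end pair removes one chance for it to guess.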

Autonomous AI agents face an even harder version of this problem. METR, the AI evaluation organization, has documented that the task-completion time horizon for frontier models is "doubling approximately every 7 months." In its March 2025 measurements, the best models could complete, with roughly 50% reliability, tasks that take a human about 50 minutes. As these time horizons grow, temporal awareness becomes critical. An agent that's been running for 3 hours doesn't know it's been running for 3 hours. Stanford and Harvard researchers found that "agentic AI systems don't fail suddenly. They drift over time." Agent drift is, by definition, a temporal phenomenon.

Any product that says "I'll remind you" or "Let's schedule this" or "Based on your recent activity" is building on an assumption that the AI understands when "now" is. Most of them are quietly wrong in edge cases that haven't been caught yet.

The Workaround Ecosystem

The good news: people are solving this. The bad news: there's no standard approach, and most solutions are held together with duct tape.

System prompt injection is the most common approach. Both Anthropic and OpenAI inject the current date into a hidden system prompt. Anthropic uses a dynamic variable. OpenAI uses a static string. Neither provides the current time. Neither updates during a conversation. It's better than nothing, but it's a floor, not a ceiling.

MCP servers are emerging as a cleaner solution. The passage-of-time-mcp server gives language models six time-related functions including current datetime with timezone support. A simpler alternative, mcp-simple-timeserver, exists solely to answer "what time is it right now." The fact that multiple developers independently built time-awareness MCP servers tells you how widespread the pain is.

Tool calling / function use is the most architecturally sound approach. Instead of trying to give the model an internal clock, you give it a tool it can call to check the time. The model doesn't need to know the time. It needs to know how to ask for it.
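Here is a rough sketch of what such a tool could look like. The tool name, the schema shape, and `handle_tool_call` are all hypothetical; field names for tool definitions vary by provider, so treat the dictionary as illustrative rather than as any vendor's exact format.

```python
import json
from datetime import datetime
from zoneinfo import ZoneInfo

# Hypothetical tool description in a JSON-schema style.
GET_TIME_TOOL = {
    "name": "get_current_time",
    "description": "Return the current date and time in the given IANA timezone.",
    "input_schema": {
        "type": "object",
        "properties": {"timezone": {"type": "string"}},
        "required": ["timezone"],
    },
}

def handle_tool_call(name, arguments, clock=datetime.now):
    """Run the tool on the application side and return a JSON string.

    `clock` is injectable so the handler can be tested with a fixed time.
    """
    if name != GET_TIME_TOOL["name"]:
        raise ValueError(f"unknown tool: {name}")
    tz_name = arguments["timezone"]
    now = clock(ZoneInfo(tz_name))
    return json.dumps({"iso": now.isoformat(timespec="seconds"), "timezone": tz_name})
```

The model only ever sees the JSON result. The clock itself stays in ordinary application code, where it can be trusted and tested.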

My solution was simpler. In my daily workflow skills (morning routine, evening close-out), Step 0 is always a clock check.

Before the AI reads my calendar, checks my backlog, or proposes any time-sensitive action, it pulls the actual system date and time. No external dependencies. No MCP server to install. Just a bash command that runs before anything else.

I also handle edge cases explicitly. If the evening routine runs after midnight (working late), "today" should refer to the previous calendar date, not the new one. The skill checks for this and adjusts. A small date file tracks when the routine last ran to prevent duplicate entries.
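The original is a bash command inside a skill; the Python below is only an illustration of the same logic, not the actual implementation. The 4 AM cutoff, the function names, and the marker-file format are all assumptions.

```python
from datetime import datetime, timedelta
from pathlib import Path

CUTOFF_HOUR = 4  # assumption: a run before 4 AM still belongs to the previous day

def logical_today(now, cutoff_hour=CUTOFF_HOUR):
    """Return the date the routine should treat as 'today'."""
    if now.hour < cutoff_hour:
        return (now - timedelta(days=1)).date()
    return now.date()

def already_ran(marker: Path, day) -> bool:
    """True if the marker file records a run for this logical day."""
    return marker.exists() and marker.read_text().strip() == day.isoformat()

def record_run(marker: Path, day) -> None:
    """Write the logical day to the marker file after a successful run."""
    marker.write_text(day.isoformat() + "\n")

# Working late: 12:45 AM on Feb 24 still counts as Feb 23.
print(logical_today(datetime(2026, 2, 24, 0, 45)))  # 2026-02-23
```

In real use, `now` would come from `datetime.now()` at the very start of the routine, before any calendar or backlog call.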

It's not elegant. But it works every time.

The Right Architecture

After digging into the research and spending a day building workarounds for my own workflow, here's what I think the correct approach is for anyone building AI-powered products that touch time:

Never trust the model's sense of time. Don't ask it what day it is. Don't let it calculate durations. Don't assume it knows when "tomorrow" is relative to the user.

Make time an infrastructure concern, not a model concern. Anthropic's engineering team articulated this clearly in their work on long-running agents: the harness (the wrapper around the model) manages time, not the model itself. The harness tracks elapsed time, enforces timeouts, and injects temporal context. The model just does its job within that frame.
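As a rough illustration of that division of labor, here is a minimal harness sketch. The class and method names are hypothetical and this is not Anthropic's implementation; it only shows elapsed-time tracking, a budget check, and a context line living outside the model.

```python
import time

class Harness:
    """Tracks wall-clock budget for an agent run, independent of the model."""

    def __init__(self, budget_seconds, clock=time.monotonic):
        self._clock = clock          # injectable so tests can control time
        self._start = clock()
        self._budget = budget_seconds

    def elapsed(self):
        """Seconds since the run started."""
        return self._clock() - self._start

    def check_budget(self):
        """Raise TimeoutError once the run exceeds its budget."""
        if self.elapsed() > self._budget:
            raise TimeoutError(f"agent exceeded its {self._budget}s budget")

    def context_line(self):
        """Text to inject into the next model call."""
        remaining = max(0.0, self._budget - self.elapsed())
        return (f"Elapsed: {self.elapsed() / 60:.0f} min. "
                f"Remaining budget: {remaining / 60:.0f} min.")
```

A loop around the model would call `check_budget()` before each step and prepend `context_line()` to the next prompt, so the agent is told how long it has been running instead of being asked to guess.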

Inject time at every boundary. Not just at conversation start. At every turn where time matters. Before calendar queries. Before scheduling suggestions. Before any action that depends on "now." The cost of a redundant date call is zero. The cost of a wrong time assumption is user trust.
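A per-turn stamp can be as small as the sketch below. `with_time_context` and the bracketed format are hypothetical; the only real requirement is that `now` is read fresh for each turn and carries the user's timezone.

```python
from datetime import datetime, timedelta, timezone

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday")

def with_time_context(user_message, now):
    """Prefix one turn with an explicit, timezone-aware 'now' line."""
    if now.tzinfo is None:
        raise ValueError("now must be timezone-aware")
    stamp = (f"[Current local time: {WEEKDAYS[now.weekday()]} "
             f"{now.isoformat(timespec='minutes')}]")
    return f"{stamp}\n{user_message}"

tz = timezone(timedelta(hours=-5))  # illustrative fixed offset for the user

# Two turns of one conversation, twenty minutes apart, across midnight.
late = with_time_context("Plan my evening.", datetime(2026, 2, 23, 23, 50, tzinfo=tz))
later = with_time_context("What's on today?", datetime(2026, 2, 24, 0, 10, tzinfo=tz))

print(late.splitlines()[0])   # [Current local time: Monday 2026-02-23T23:50-05:00]
print(later.splitlines()[0])  # [Current local time: Tuesday 2026-02-24T00:10-05:00]
```

With a date set once at conversation start, the second turn would still be answered as if it were Monday.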

Test for temporal failures specifically. Add test cases for: midnight boundary crossings, timezone differences, long-running conversations, leap years, daylight saving transitions, end-of-month dates. These are the edge cases that ship broken because nobody thought to check.
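A few of these cases, written as plain assertions. In a real product the assertions would target your own scheduling functions; `local_date` is a hypothetical stand-in, and New York is an arbitrary choice of timezone.

```python
from datetime import date, datetime, timedelta, timezone
from zoneinfo import ZoneInfo

NY = ZoneInfo("America/New_York")

def local_date(instant, tz):
    """The calendar date a user in `tz` sees at this instant."""
    return instant.astimezone(tz).date()

def test_midnight_boundary():
    before = datetime(2026, 2, 24, 4, 59, tzinfo=timezone.utc)  # 23:59 in New York
    after = before + timedelta(minutes=2)                       # 00:01 in New York
    assert local_date(before, NY) == date(2026, 2, 23)
    assert local_date(after, NY) == date(2026, 2, 24)

def test_leap_day_and_month_end():
    assert date(2024, 2, 28) + timedelta(days=1) == date(2024, 2, 29)
    assert date(2025, 2, 28) + timedelta(days=1) == date(2025, 3, 1)
    assert date(2026, 1, 31) + timedelta(days=1) == date(2026, 2, 1)

def test_dst_spring_forward():
    # US clocks jump from 02:00 to 03:00 on March 8, 2026.
    start = datetime(2026, 3, 8, 1, 30, tzinfo=NY)
    end = datetime(2026, 3, 8, 3, 30, tzinfo=NY)
    # Compare in UTC: subtracting two datetimes that share a tzinfo
    # uses wall-clock arithmetic and would report two hours.
    elapsed = end.astimezone(timezone.utc) - start.astimezone(timezone.utc)
    assert elapsed == timedelta(hours=1)

for test in (test_midnight_boundary, test_leap_day_and_month_end, test_dst_spring_forward):
    test()
```

The daylight saving case is the one most likely to surprise: the wall clock shows a two-hour gap, but only one hour actually passes.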

Design your UX to show its work. When your AI says "I checked your calendar for today," show the date it actually queried. When it proposes time blocks, show the current time it's working from. Transparency turns a wrong answer into a catchable mistake instead of silent trust erosion.

What This Tells Us About AI Products

There's a broader lesson here that goes beyond time. Language models are incredibly capable at generating text, reasoning through problems, and writing code. But they have blind spots that aren't obvious until they break something.

Time is one of those blind spots. Spatial awareness is another. Self-knowledge about their own capabilities and limitations is another. These aren't bugs that will be fixed in the next model release. They're structural properties of how these systems work.

The builders who ship great AI products won't be the ones who assume the model can do everything. They'll be the ones who know exactly what the model can't do and build infrastructure around those gaps.

Right now, every AI scheduling tool, every autonomous agent, every workflow that touches a calendar is building on an assumption that might be wrong. The model lives in a frozen moment. Your job, as the builder, is to give it a clock.

References

- "How LLMs Make Sense of Time," Communications of the ACM, 2026.

- Yuqing Wang and Yun Zhao, "TRAM: Benchmarking Temporal Reasoning for Large Language Models," Findings of ACL, 2024. arXiv:2310.00835

- Ryan Koo et al., "Benchmarking Cognitive Biases in Large Language Models as Evaluators" (CoBBLEr), Findings of ACL, 2024. arXiv:2309.17012

- Yasuaki Sumita et al., "Cognitive Biases in Large Language Models: A Survey and Mitigation Experiments," ACM SAC, 2025. arXiv:2412.00323

- Thomas Kwa et al., "Measuring AI Ability to Complete Long Tasks," METR, March 2025. arXiv:2503.14499

- Pengcheng Jiang et al., "Adaptation of Agentic AI," Stanford / Harvard / UC Berkeley / Caltech, December 2025. arXiv:2512.16301

- Justin Young, "Effective Harnesses for Long-Running Agents," Anthropic Engineering Blog, November 2025.

- Povio Engineering, "How We Made AI Time-Aware," Povio Blog, May 2025.

- anthropics/claude-code, GitHub Issue #11728: "Claude defaults to knowledge cutoff date instead of current system date," 2025.

- anthropics/claude-code, GitHub Issue #6281: "Claude repeatedly mistakes current year as 2024 despite environment showing 2025," 2025.

- home-assistant/core, GitHub Issue #137795: "Gemini LLM handles full day events from a calendar wrong," February 2025.

- Jérémie Lumbroso, "passage-of-time-mcp: An MCP server for temporal awareness," GitHub.

- Andy Brandt, "mcp-simple-timeserver," GitHub.